Services

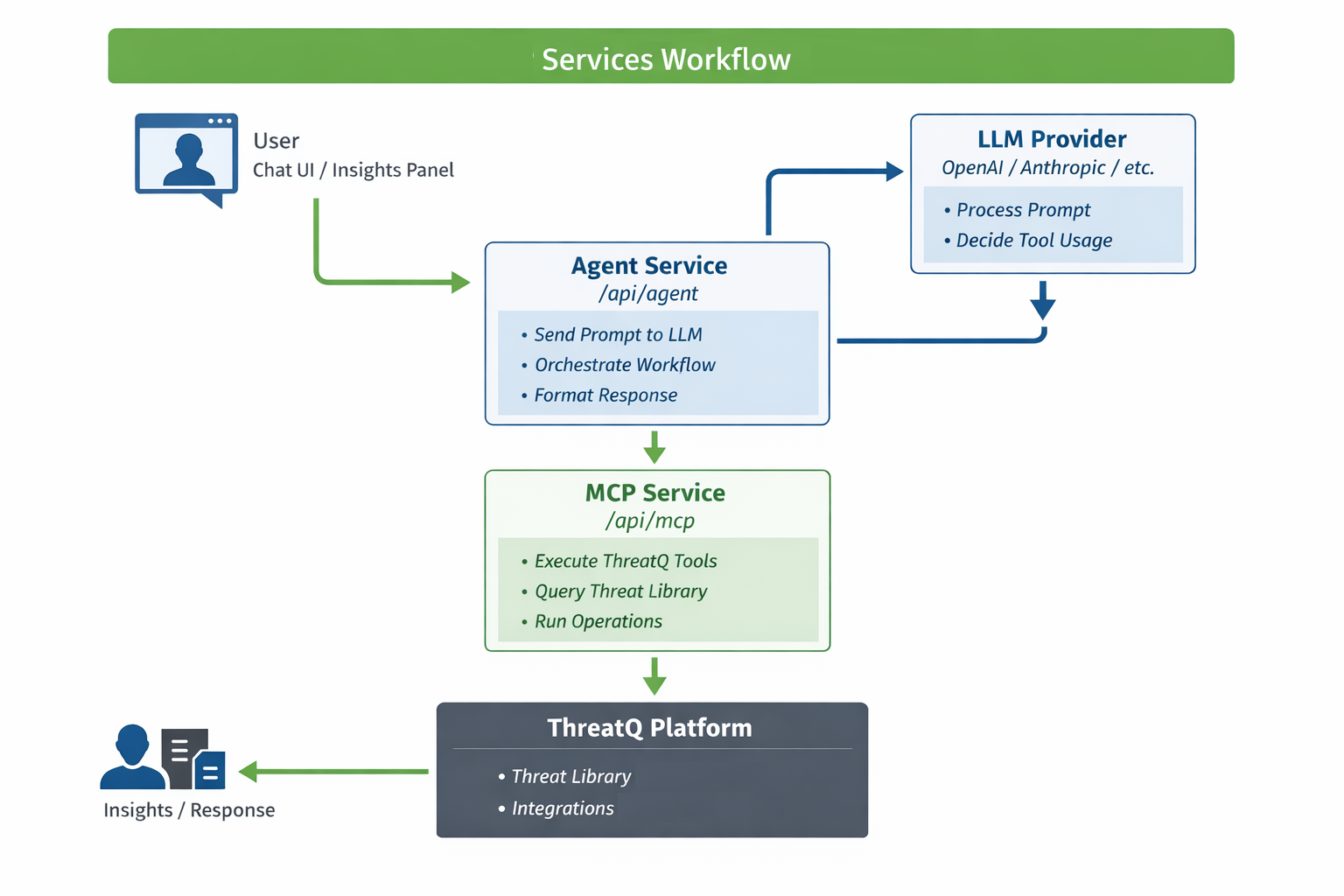

The Threat Research Agent framework in ThreatQ enables AI-driven agentic workflows by connecting large language models (LLMs) with platform capabilities. It is composed of two primary services, the MCP Service and the Agent Service, which work together to provide intelligent automation, enrichment, and analysis.

MCP Service (/api/mcp)

The MCP Service serves as the execution layer and functions as the tool provider for the agent. It exposes ThreatQ operations and capabilities as structured, callable tools that the agent can invoke. Acting as a bridge between the Agent Service and the ThreatQ platform, it enables access to Threat Library queries as well as configured operations.

This service does not interact with an LLM. Instead, it executes requests in a controlled, deterministic manner, so that every interaction with ThreatQ functionality is consistent and secure.
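The tool-provider role can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: `ToolRegistry`, the `search_threat_library` tool, and the in-memory sample data are hypothetical and do not reflect the actual ThreatQ implementation or its API. The point is only that a named tool maps to a plain function, so the same arguments always produce the same result and no LLM is involved.

```python
from typing import Any, Callable, Dict, List

# Hypothetical in-memory stand-in for Threat Library data (not real ThreatQ access).
_SAMPLE_LIBRARY: List[Dict[str, Any]] = [
    {"type": "indicator", "value": "198.51.100.7", "score": 8},
    {"type": "indicator", "value": "example.test", "score": 3},
]

ToolFn = Callable[..., Any]


class ToolRegistry:
    """Hypothetical registry that exposes functions as named, callable tools."""

    def __init__(self) -> None:
        self._tools: Dict[str, ToolFn] = {}

    def register(self, name: str) -> Callable[[ToolFn], ToolFn]:
        """Return a decorator that stores the function under the given tool name."""
        def decorator(fn: ToolFn) -> ToolFn:
            self._tools[name] = fn
            return fn
        return decorator

    def list_tools(self) -> List[str]:
        """Names an agent could choose from, in sorted order."""
        return sorted(self._tools)

    def call(self, name: str, arguments: Dict[str, Any]) -> Any:
        """Execute a tool by name; unknown names are rejected, not guessed."""
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](**arguments)


registry = ToolRegistry()


@registry.register("search_threat_library")
def search_threat_library(min_score: int = 0) -> List[Dict[str, Any]]:
    """Hypothetical tool: return sample objects at or above a score threshold."""
    return [obj for obj in _SAMPLE_LIBRARY if obj["score"] >= min_score]
```

In this sketch, `registry.call("search_threat_library", {"min_score": 5})` returns only the sample object with a score of 8. Rejecting unknown tool names outright is one way to keep execution controlled.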

Agent Service (/api/agent)

The Agent Service interfaces with a configured LLM provider such as OpenAI, Anthropic, Gemini, or Ollama. It serves as the reasoning engine that processes natural language input, determines appropriate actions, and orchestrates the use of MCP tools to execute tasks within the ThreatQ platform.

Because the LLM decides when and how to invoke the available tools, the Agent Service can autonomously execute multi-step workflows. It then returns structured, human-readable insights and results.

How the Services Work Together

A typical workflow looks like this:

- The user submits a prompt through the Agent UI (chat or operation).

- The Agent Service sends the prompt to the LLM.

- The LLM determines that a tool or action is needed.

- The Agent Service calls the MCP Service with the appropriate tool.

- The MCP Service executes the tool in ThreatQ.

- The results are returned to the Agent Service.

- The Agent Service formats the final response and presents it to the user.
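The workflow above can be sketched as a simple loop. This is an illustrative sketch only: `run_agent`, `fake_llm`, `fake_mcp_call`, the `count_indicators` tool, and the message format are all hypothetical names and shapes chosen for the example, not the real Agent Service, LLM provider, or MCP Service interfaces. The LLM is replaced by a scripted function so the example runs without any external service.

```python
from typing import Any, Callable, Dict, List

Message = Dict[str, Any]


def fake_llm(messages: List[Message]) -> Dict[str, Any]:
    """Scripted stand-in for an LLM: request one tool call, then give a final answer."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        # No tool output yet, so the "model" decides a tool is needed.
        return {"type": "tool_call", "name": "count_indicators",
                "arguments": {"min_score": 5}}
    count = tool_results[-1]["content"]
    return {"type": "final", "text": f"Found {count} matching indicators."}


def fake_mcp_call(name: str, arguments: Dict[str, Any]) -> Any:
    """Stand-in for the MCP Service: run the named tool against sample data."""
    sample_scores = [8, 3, 6]  # hypothetical indicator scores
    if name == "count_indicators":
        return sum(1 for s in sample_scores if s >= arguments["min_score"])
    raise KeyError(f"unknown tool: {name}")


def run_agent(
    prompt: str,
    llm: Callable[[List[Message]], Dict[str, Any]],
    call_tool: Callable[[str, Dict[str, Any]], Any],
    max_steps: int = 5,
) -> str:
    """Send the prompt to the LLM, run any requested tools, return the final text."""
    messages: List[Message] = [{"role": "user", "content": prompt}]
    for _ in range(max_steps):
        decision = llm(messages)
        if decision["type"] == "final":
            return decision["text"]
        # The LLM asked for a tool: execute it and feed the result back.
        result = call_tool(decision["name"], decision["arguments"])
        messages.append({"role": "tool", "name": decision["name"], "content": result})
    raise RuntimeError("agent did not finish within max_steps")


answer = run_agent("How many high-score indicators are there?", fake_llm, fake_mcp_call)
```

The `max_steps` cap is a design choice in this sketch rather than a documented platform setting; it keeps a multi-step workflow from looping indefinitely if the model never produces a final answer.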